Mind The Trust Gap: When AI Enters Familiar Products

People don’t build new mental models for every system they encounter. They reuse the ones they already have. When someone interacts with an AI system for the first time, they treat it like they’d treat a person. They expect consistency. They expect it to do what it says. They expect it to not surprise them in bad ways. That worked reasonably well when systems had a clear scope. A booking platform books. A search engine searches. But right now, teams everywhere are integrating AI capabilities into these familiar products or transforming them entirely, and the trust users built over years doesn’t automatically extend to the new parts or reinventions.

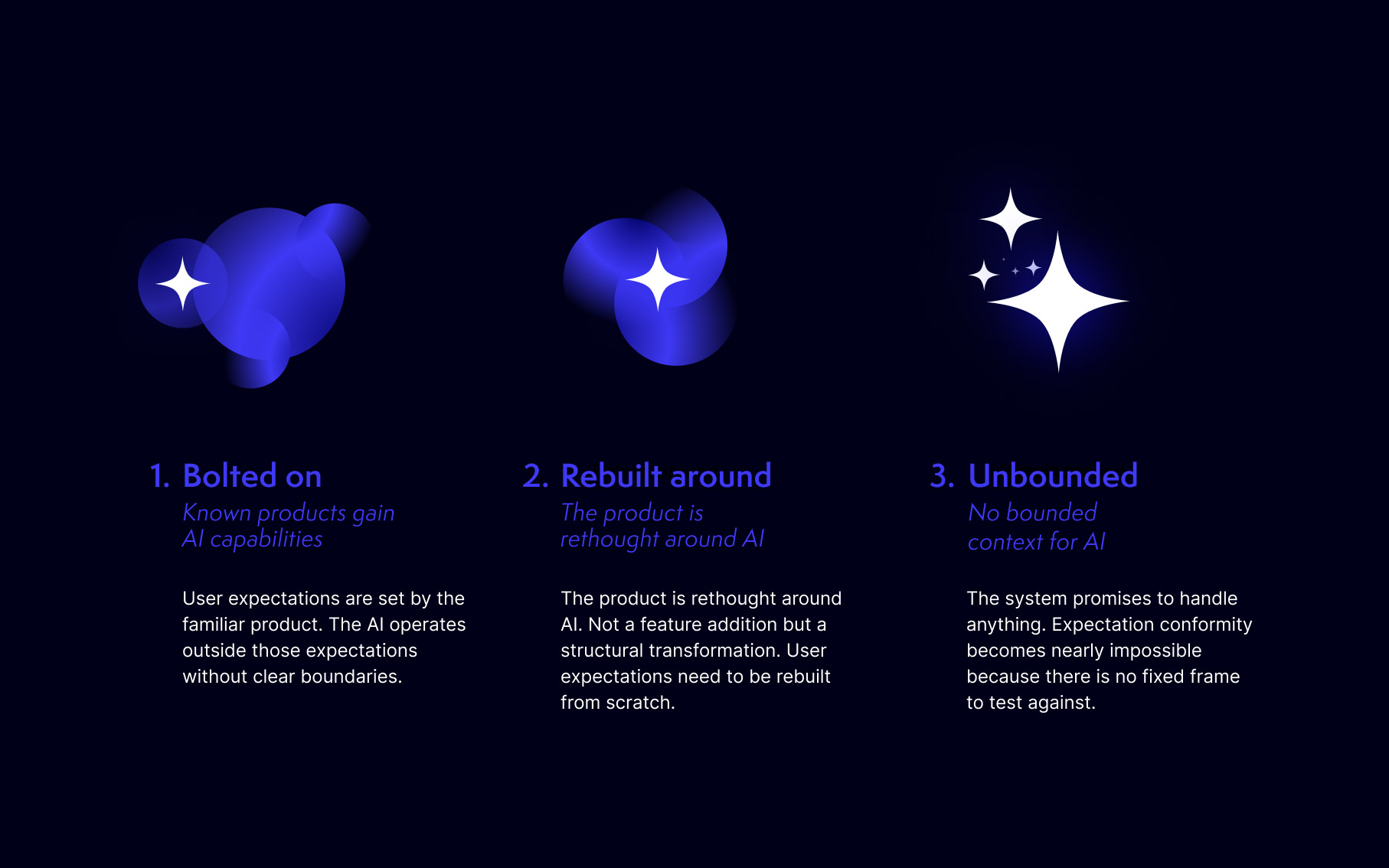

Think of a platform like Airbnb adding an AI layer to handle search and communication. The user’s expectations are anchored in years of using a product they understood. The AI operates outside those anchors. Nobody told them where the reliable part ends and the experimental part begins. This is happening everywhere. Established products integrate AI or rebuild themselves around it, in the form of shortcuts, assistants, generators. The pitch is: everything faster, everything easier. But the AI component is probabilistic where the product was deterministic. Confident where it should be uncertain.

As Sara Gold put it in Crossing the AI Divide (2025): »When generative AI fails, it’s often quite invisible, and when it does fail, it also can look like it’s working.«

In usability, there’s a term for what people expect: expectation conformity (»Erwartungskonformität« in German). It is one of the dialogue principles in ISO 9241-110. A system should behave the way users anticipate based on their experience and context.

Simple enough when the context is bounded. But what happens when a bounded product suddenly contains a component that isn’t, or transforms into one without bounds altogether? The experience people bring is shaped by years of using a product they understood. The AI layer they’re now facing operates by different rules, and nobody drew the line between the two.

The models we carry

I spent my bachelor’s thesis studying trust on peer-to-peer sharing platforms. Airbnb, Vinted, BlaBlaCar, that era. The trust models I used back then assumed two humans on either side of an interaction. The platform sat in the middle, mediating. But the trust was interpersonal. One person trusting another person, filtered through an interface. Those models worked. On a sharing platform, there really is a human on each side. Expectation conformity makes sense there because the system’s behavior maps onto social patterns people already know.

But then something happened that we didn’t properly account for. The systems themselves became the entity people had to trust. Not the human behind the product. Not the company operating it. The AI itself became the interaction partner. It’s making decisions about what you see, what you’re offered, what you qualify for.

And we kept using the same trust models. Not because someone tested whether they still apply. Because we didn’t have anything better.

Projecting human expectations onto machines

I’ve spent the last few years working on products that ran straight into this problem. Some added AI features. Others simply became more automated with each release, doing things on behalf of users that users used to do themselves. The trust questions were the same either way. In parallel, I’ve been running workshops on human-centered algorithm design and discussing these patterns with peers at UX and interaction design conferences. The question keeps coming back: should we project human trust parameters onto these systems? Should we treat them like human interaction partners, with expectation conformity as the rulebook? The market is full of AI products shaped like people. Chatbots with names, avatars with personalities, virtual companions. The pattern works because projection works. But it’s not trust. It’s puppetry, and these products can’t live up to their promise.

Parts of that work. Consistency doesn’t need intentionality. An AI system that gives you comprehensible, stable results builds something that feels like trust. Or at least reduces anxiety. Predictability works the same way whether the trustee is a person or a machine.

But benevolence? The belief that the other party wants what’s good for you? That’s a human thing. An AI doesn’t want anything. It optimizes for whatever objective its designers set, and that objective may or may not have anything to do with your wellbeing. When careful UX design makes a system feel like it cares through warm language and gentle nudges, without the system actually being structured to protect you, we’ve crossed a line.

There’s a pattern the UX research community calls »Mother«. A system that wraps surveillance and control in the aesthetics of care. It feels trustworthy without being trustworthy. And expectation conformity, applied without thinking, produces exactly this. The system meets your expectations. It feels right. It operates in someone else’s interest.

This is a business problem. Products that mimic care without delivering it may drive initial adoption, but they don’t retain users. People notice when the promise doesn’t hold up. They might not articulate it as a trust issue, but they leave. Churn doesn’t always look like outrage. More often, it looks like quiet disengagement. A pattern that even the biggest players haven’t solved. And no amount of onboarding polish will fix a product that users have learned not to believe.

For designers, this changes the job. The product concept itself needs rethinking. What does this product do now? What should users expect from it? These aren’t interface questions. They’re strategic ones. And they take time. Iteration, synthesis, testing with real users. Most organizations skip that part. They ship the AI feature, polish the onboarding, and call it innovation. That’s marketing, not product work.

The difference between trust and trustworthiness

Gry Hasselbalch makes a distinction I keep coming back to. Trust is a feeling in the user. Trustworthiness is a property of the system. Can it be held accountable? Can it be audited? Does the user have real control? You can absolutely design for trust without trustworthiness. Dark patterns do it every day. But you can’t call that responsible design.

The uncomfortable flip side: trustworthiness without expectation conformity doesn’t reach anyone. You can build the most accountable, auditable, fair system in the world, and if it behaves in ways people don’t expect or understand, nobody will use it. Or worse, they’ll use it wrong.

So we need both. And we don’t have a clean model for how they fit together. Which means you have to find out what works. Not through theory alone – through building, testing, and iterating. Briefings and frameworks give you a starting point, but they won’t tell you where trust actually breaks in your product. Only real users will.

When accuracy becomes a trust question

A lot of the current HCI research focuses on transparency as the answer. Make the system explainable. Show the user how it works. That’s necessary, but it’s not enough, and it can be a distraction from harder questions. Like: how accurate does a system need to be? And who decides what “good enough” means?

I’ve worked on AI-supported projects over the past months where we started with poor accuracy and had to improve step by step. That’s normal. You don’t launch with 99% and call it a day. You iterate. But the question of how accurate is accurate enough is a trust question, not just a performance metric. It depends on what the user expects. An AI that helps you sort emails can afford to get things wrong sometimes. An AI that supports medical diagnosis can’t.

But the real problem isn’t low accuracy. It’s dishonesty about accuracy. There’s a pattern I see in many current AI products, especially out of the US: instead of saying “I don’t know,” the system takes a statistical guess and presents it with confidence. It’s right maybe half the time. The user can’t tell the difference between a confident answer and a coin flip dressed up as expertise. That’s not expectation conformity. That’s expectation manipulation. The system promises competence it doesn’t have.

There are two ways this falls apart. Either the user knows the domain well enough to spot when the output is wrong. Or they don’t, and they take the output to someone who does. A colleague. A client. And that’s when it gets embarrassing: the result sounded confident but turns out to be nonsense. The system didn’t just fail the user. It made them look bad in front of someone whose judgment they depend on.

Trustworthiness means being honest about your limits. And those limits depend on context. A writing assistant that suggests awkward phrasing is annoying. A diagnostic tool that guesses wrong can cause real harm. The threshold for “good enough” isn’t universal. It’s set by the field the product operates in, the stakes involved, and what users are relying on it for. If a system can’t deliver an accurate result within that context, the right design isn’t to hide that behind a polished interface. It’s to say so. To hand off to a human. To create that moment where the system steps back and the person steps in.

This is what I think augmented intelligence actually means. Not a system that replaces human judgment, but one that knows when to defer to it. Expert systems need a handshake between machine capability and human expertise. The intelligence is in the combination, not in the illusion that the machine has all the answers.
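
The step-back moment described above can be sketched in a few lines. This is a minimal illustration, not a recommended implementation: the domain names, the threshold values, and the `respond` function are all hypothetical, and it assumes the system can produce a calibrated confidence score, which many current AI products cannot do reliably.

```python
from dataclasses import dataclass

# Hypothetical per-domain bars for "good enough". Real values would have to
# come from testing with users in each field, not from this sketch.
MIN_CONFIDENCE = {
    "email_sorting": 0.70,
    "writing_assistant": 0.60,
    "medical_diagnosis": 0.99,
}


@dataclass
class Response:
    kind: str     # "answer" or "handoff"
    message: str


def respond(domain: str, answer: str, confidence: float) -> Response:
    """Show the answer only if confidence clears the domain's bar.

    Assumes `confidence` is a calibrated probability between 0 and 1.
    """
    threshold = MIN_CONFIDENCE.get(domain)
    # Unknown domain or confidence below the bar: say so and defer to a person.
    if threshold is None or confidence < threshold:
        return Response(
            "handoff",
            "I'm not confident enough to answer this. Passing it to a person.",
        )
    return Response("answer", answer)


if __name__ == "__main__":
    print(respond("email_sorting", "Newsletter", 0.80))
    print(respond("medical_diagnosis", "Likely benign", 0.80))
```

The same confidence of 0.80 produces an answer when sorting email and a handoff in a diagnostic context. The threshold belongs to the context, not to the model.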

What I’ve learned (and what I haven’t figured out)

After years of building products with algorithmic and AI-driven components, running workshops, and discussing these patterns at conferences, I don’t have a framework. What I have is closer to a set of observations from the field:

- People project human trust models onto systems. That’s not a bug. It’s how we work. The question is whether we meet those expectations honestly or misuse them.

- Expectation conformity without structural trustworthiness will cost you. Users don’t stay with products that overpromise. They leave quietly, and they don’t come back.

- AI systems don’t improve without a shared frame. In every project I’ve worked on, real progress started once the team agreed on what accuracy means in this context and where the guardrails sit.

And here’s where I land, practically: you can’t theorize your way to trustworthy products. You have to test. Go to the field. Talk to customers. Let mental models form over time. The agile impulse to experiment and iterate is right, but it needs a companion question that most product teams don’t ask often enough: Are we building something that deserves the trust people are giving it? Trust is reciprocal. People give you their time, their attention, their data, their money. If they don’t get enough back, they stop. Not because they read your privacy policy or thought about ethics. Because the exchange stopped making sense to them.

We’re in a transitional period. The old trust models aren’t useless, but they’re incomplete. New ones will come, but they need time. In the meantime, people building these systems carry a specific kind of responsibility: use the tools we have, including expectation conformity, without pretending they’re enough.

The gap between »feels trustworthy« and »is trustworthy« is where the actual design work happens right now. Most of us are still figuring out how wide it is. I’m one of them.